Shadow AI: Identity Shield

AI-Generated Summary

AI-Generated Content Disclaimer

Note: This summary is AI-generated and may contain inaccuracies, errors, or omissions. If you spot any issues, please contact the site owner for corrections. Errors or omissions are unintended.

This presentation at Identity Shield, Pune explores Shadow AI as the newest supply chain disruptor. Anant Shrivastava examines how AI tools, often adopted without oversight from IT or security teams, are spreading rapidly across organizations and creating a new class of shadow technology risk at the intersection of software supply chain security, identity management, and data governance. The talk covers Shadow AI risk classification, discovery approaches, and a balanced “carrots and sticks” strategy for organizational adoption, all framed within an operational loop for continuous management.

Key Topics Covered

The Changed Landscape:

- The world of software development and IT has fundamentally shifted with the rise of AI-driven tools

- AI tools are being adopted across organizations at a pace that outstrips traditional security and governance processes

- This creates a new category of risk that extends beyond traditional Shadow IT

Shadow AI as a Supply Chain Vector:

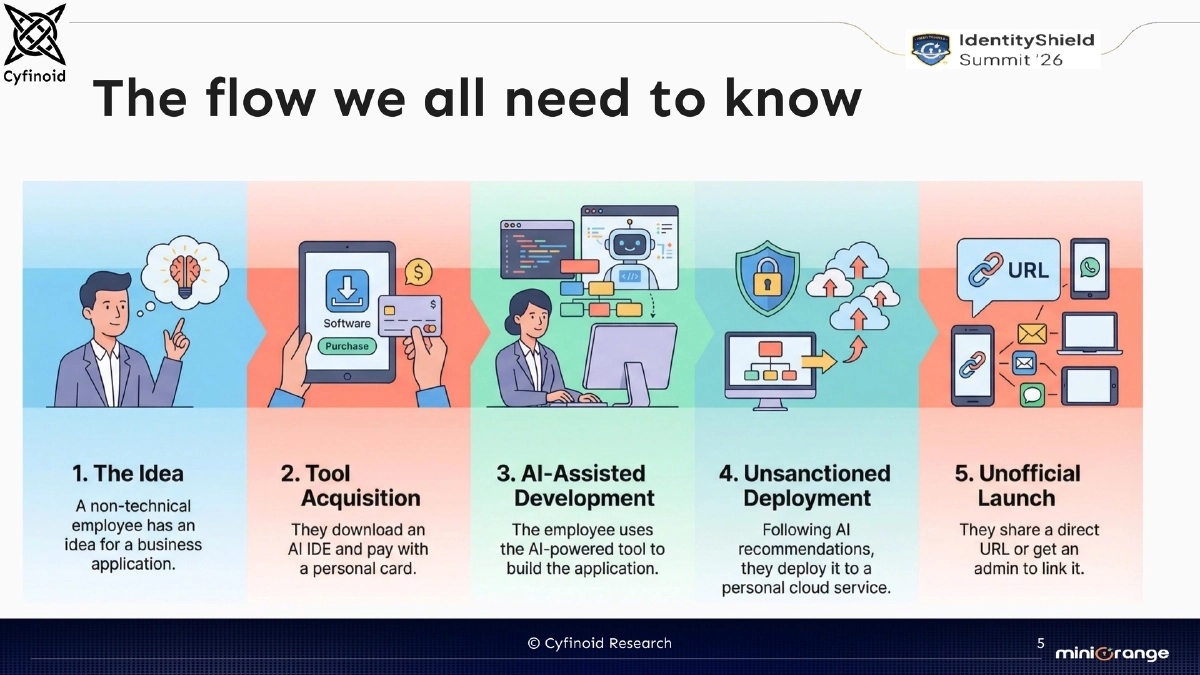

- Shadow AI encompasses unauthorized AI tools, models, and services used within an organization without formal approval or oversight

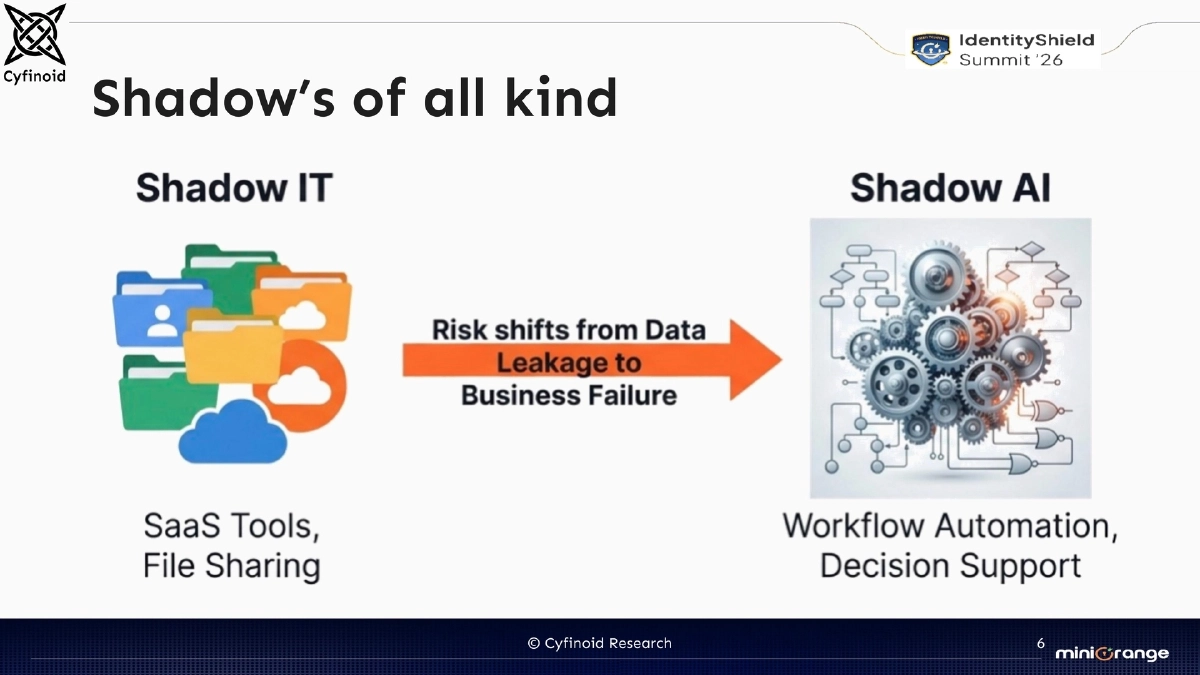

- Unlike traditional Shadow IT (unauthorized hardware or SaaS), Shadow AI adds risks of its own: data leaking into training pipelines, hallucinated model output landing in production code, and unvetted AI-generated artifacts entering the software supply chain

- The intersection of Shadow AI with identity management is particularly critical: AI agents acting on behalf of users inherit, and can expose, those users' identity credentials

Types of Shadows:

- Shadow IT: unauthorized technology adoption by individuals or departments

- Shadow AI: unauthorized AI tool usage extending beyond traditional Shadow IT patterns

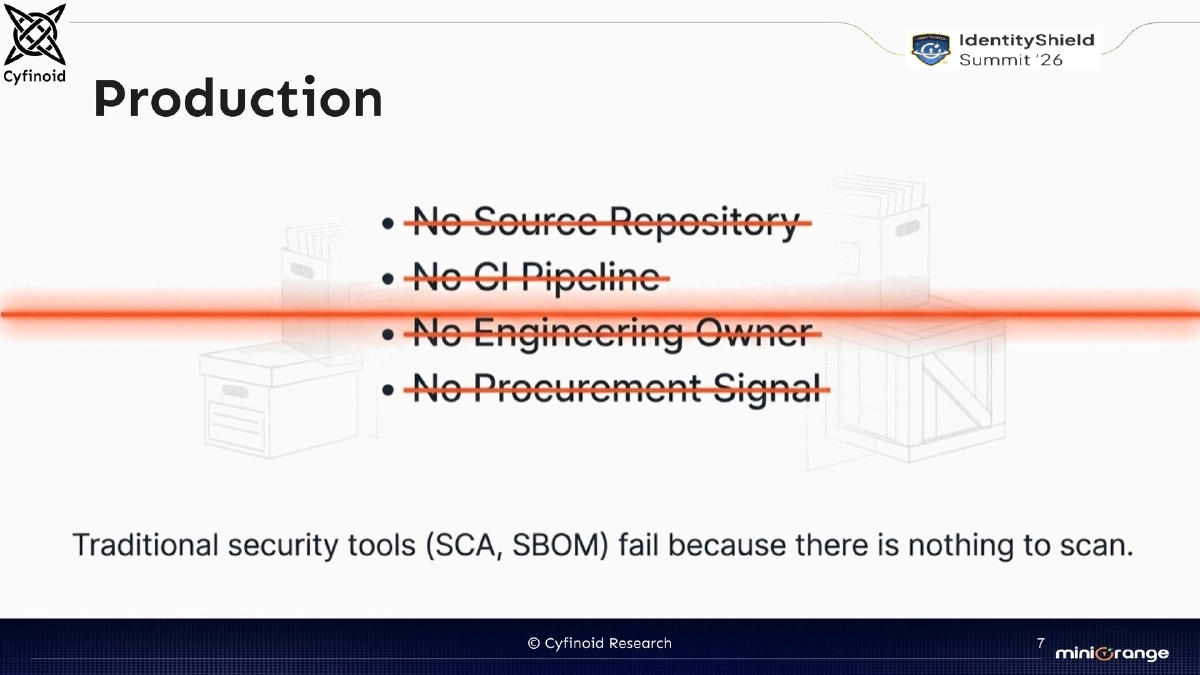

- Both converge in production environments where unauthorized tools generate code, configurations, or data that enter managed systems

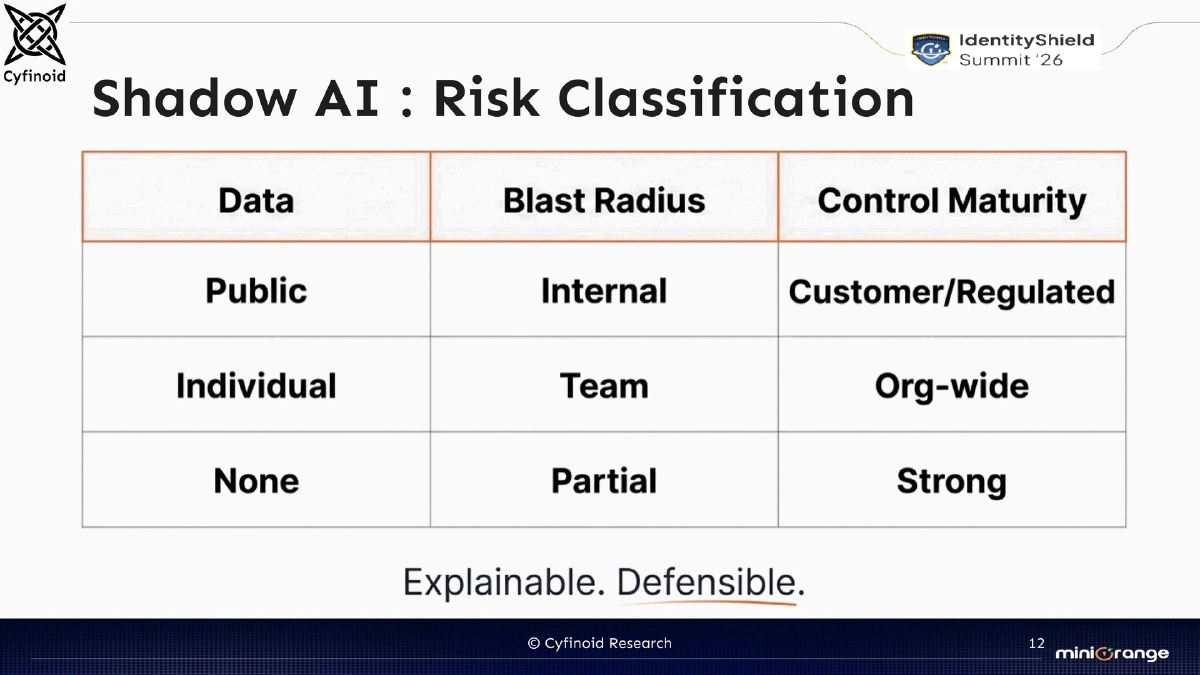

Risk Classification Framework:

- Shadow AI risks are classified along several dimensions: the type of AI tool, the data it accesses, the outputs it produces, and how deeply it is integrated into the organization

- Risk is higher when AI tools can access production data, credentials, or customer information

- Risk is lower for isolated experimentation that exposes no organizational data
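
The summary does not give the talk's actual scoring scheme, so the following is only a minimal sketch of how the dimensions above might be encoded. The `AIToolUsage` fields, the three risk labels, and the ordering of the checks are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIToolUsage:
    """One observed use of an AI tool. All field names are illustrative assumptions."""
    name: str
    touches_production_data: bool
    touches_credentials: bool
    touches_customer_data: bool
    outputs_reach_production: bool  # e.g. generated code or config gets deployed


def classify_risk(usage: AIToolUsage) -> str:
    """Return 'high', 'medium', or 'low' using hypothetical rules."""
    sensitive_access = (
        usage.touches_production_data
        or usage.touches_credentials
        or usage.touches_customer_data
    )
    if sensitive_access:
        # Access to production data, credentials, or customer information.
        return "high"
    if usage.outputs_reach_production:
        # No sensitive input, but unvetted output enters managed systems.
        return "medium"
    # Isolated experimentation with no organizational data exposure.
    return "low"
```

Under these assumed rules, an agent holding deployment credentials is classified as `high` regardless of its other attributes, while a sandboxed experiment with no data access is classified as `low`. A real framework would likely weight the dimensions rather than short-circuit on the first match.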

Current Approaches and Their Limitations:

- Traditional approaches to handling Shadow IT are insufficient for Shadow AI due to the speed of adoption and the difficulty of detecting AI tool usage

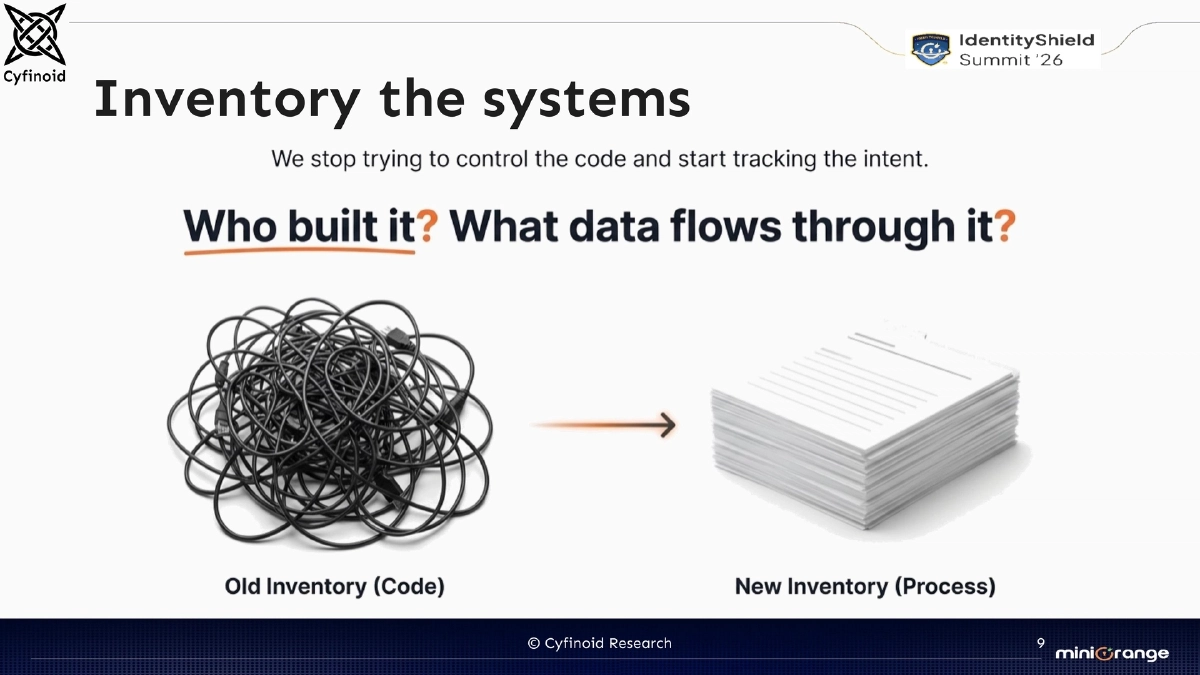

- Inventory-based approaches require new discovery angles specific to AI tool patterns

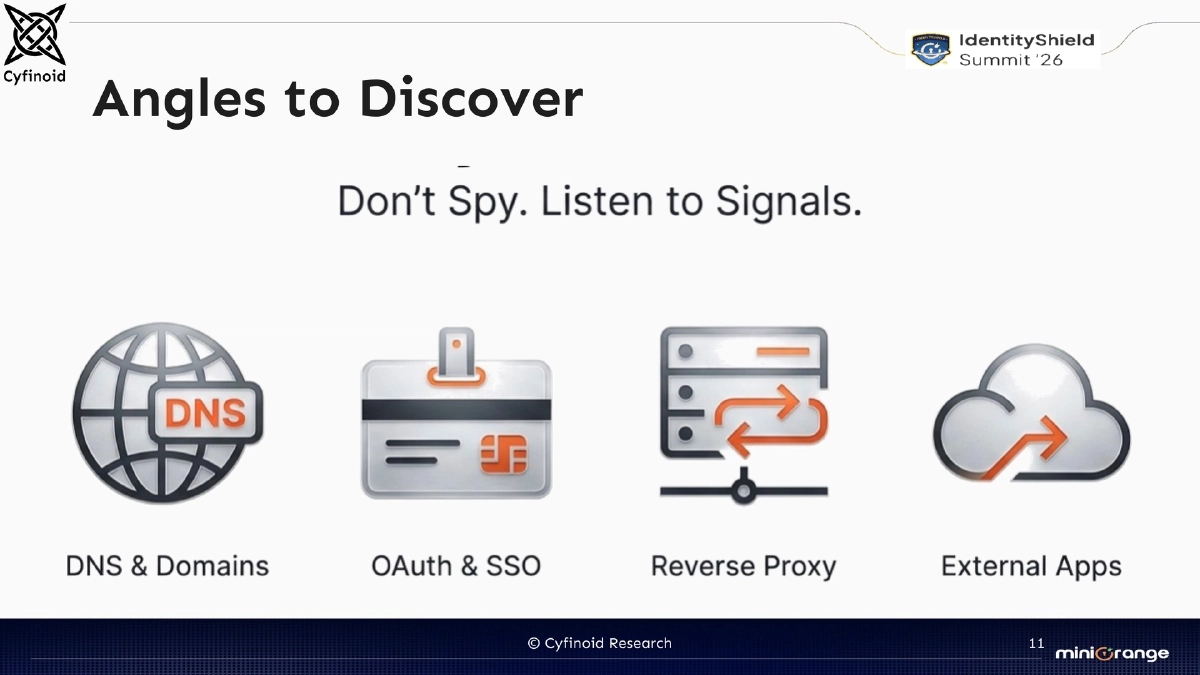

Discovery and Inventory:

- Multiple angles exist for discovering Shadow AI usage: network traffic analysis, expense report monitoring, endpoint tooling audits, and API access logs

- Building and maintaining an inventory of AI tools in use is the foundational step for managing Shadow AI risk
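
As a rough illustration of combining discovery angles into one inventory, the sketch below merges two of the signals named above (network traffic and expense reports). The domain list, tool names, and record shape are hypothetical placeholders, not data from the talk; a real deployment would maintain its own mapping and add the endpoint and API-log signals.

```python
from collections import defaultdict

# Hypothetical mapping from observed domains to tool names (placeholder values).
KNOWN_AI_DOMAINS = {
    "api.example-llm.test": "ExampleLLM",
    "chat.example-assistant.test": "ExampleAssistant",
}


def build_inventory(proxy_domains, expense_vendors, sanctioned):
    """Combine discovery signals into {tool: {"sources": set, "sanctioned": bool}}."""
    sources = defaultdict(set)

    # Signal 1: domains seen in proxy or DNS logs.
    for domain in proxy_domains:
        tool = KNOWN_AI_DOMAINS.get(domain.lower())
        if tool:
            sources[tool].add("network")

    # Signal 2: vendor names on expense reports, matched case-insensitively.
    known_tools = {name.lower(): name for name in KNOWN_AI_DOMAINS.values()}
    for vendor in expense_vendors:
        tool = known_tools.get(vendor.lower())
        if tool:
            sources[tool].add("expenses")

    return {
        tool: {"sources": seen, "sanctioned": tool in sanctioned}
        for tool, seen in sources.items()
    }
```

Recording which sources surfaced each tool is a deliberate choice here: a tool that appears in expenses but never in network logs may point to a blind spot in traffic monitoring.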

The Operational Loop:

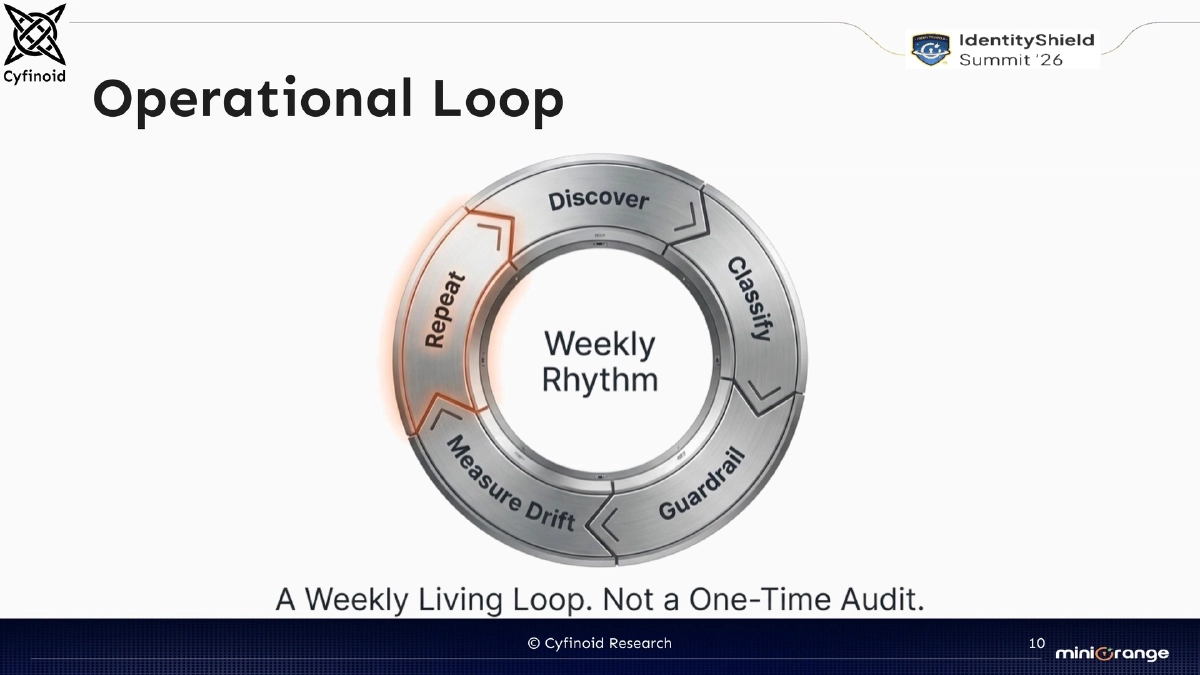

- A continuous cycle of discovery, assessment, policy enforcement, and review

- Not a one-time audit but an ongoing operational process that evolves as new AI tools emerge
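
One way to picture the cycle above is as a function that runs on a schedule and emits the next action for each tool. This is a sketch under stated assumptions: the status values, the 90-day review interval, and the record layout are invented for illustration and are not taken from the talk.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # assumed cadence, not from the talk


def run_cycle(inventory, observed_tools, today):
    """One pass of discovery, assessment, policy enforcement, and review.

    inventory maps tool name -> {"status": str, "last_reviewed": date or None}.
    Returns a list of (tool, action) pairs; inventory is updated in place.
    """
    # Discovery: record tools seen for the first time.
    for tool in observed_tools:
        if tool not in inventory:
            inventory[tool] = {"status": "unassessed", "last_reviewed": None}

    actions = []
    for tool, record in sorted(inventory.items()):
        last = record["last_reviewed"]
        if record["status"] == "unassessed":
            # Assessment: anything new is queued for a risk review.
            actions.append((tool, "assess"))
        elif record["status"] == "blocked" and tool in observed_tools:
            # Policy enforcement: a blocked tool is still being used.
            actions.append((tool, "enforce"))
        elif last is None or today - last >= REVIEW_INTERVAL:
            # Review: earlier decisions expire and are revisited.
            actions.append((tool, "review"))
    return actions
```

Because every decision carries a review date, nothing in the inventory is settled permanently, which is the difference between this loop and a one-time audit.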

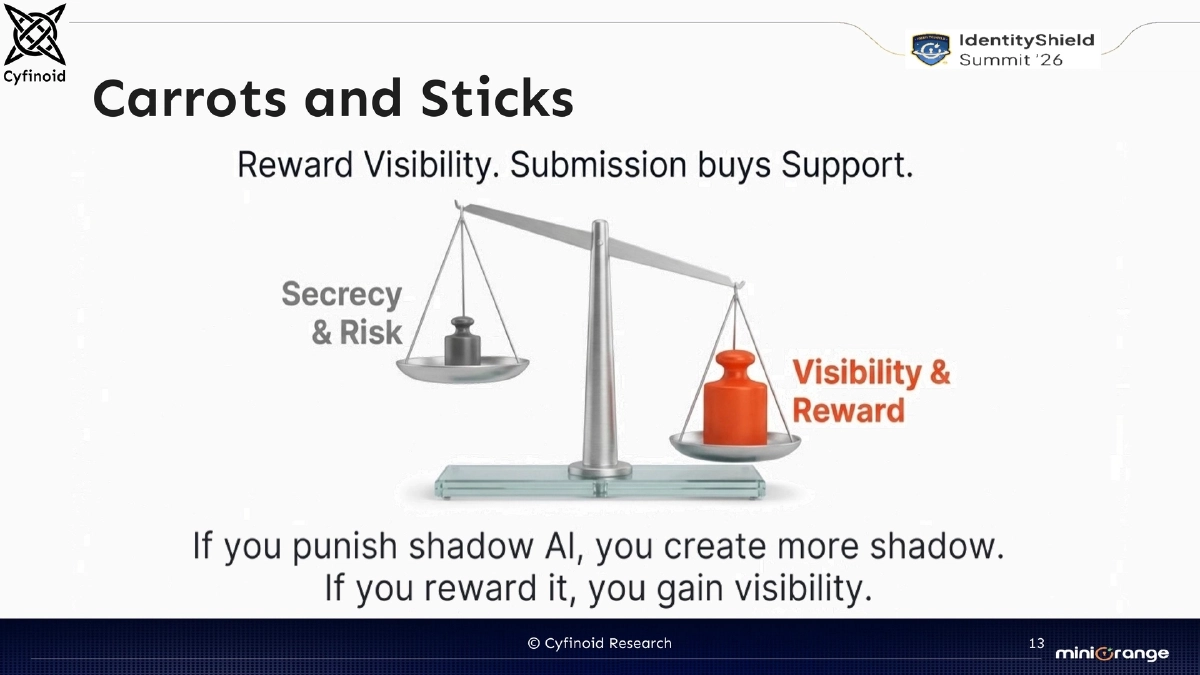

Carrots and Sticks — Balanced Governance:

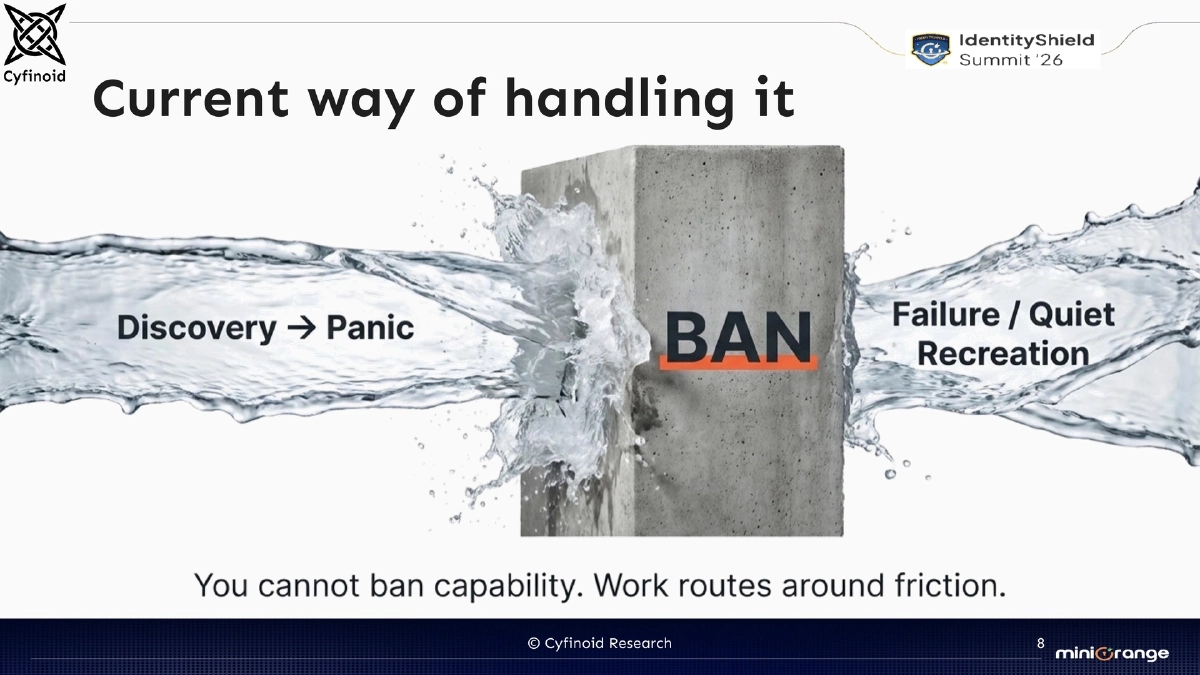

- Pure enforcement (sticks) drives Shadow AI further underground; pure enablement (carrots) without guardrails increases risk

- Effective governance combines sanctioned AI tool options (carrots) with clear policies and consequences for unauthorized usage (sticks)

- The incentive loop must align organizational productivity goals with security requirements
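
The balance described above can be sketched as a small decision function. The three outcomes, the `alternatives` mapping, and all tool names are hypothetical; the point is only that the response to unsanctioned usage offers an approved option first and falls back to restriction when none exists.

```python
def governance_response(tool, sanctioned, alternatives):
    """Decide how to respond to observed use of `tool`.

    sanctioned: set of approved tool names.
    alternatives: maps an unsanctioned tool to an approved substitute.
    Both structures and the three outcomes are illustrative assumptions.
    """
    if tool in sanctioned:
        return ("allow", tool)

    substitute = alternatives.get(tool)
    if substitute in sanctioned:
        # Carrot: point the user at an approved tool that covers the same need.
        return ("redirect", substitute)

    # Stick: no approved option exists yet, so policy applies, and the gap is
    # flagged so that a sanctioned option can be evaluated.
    return ("restrict_and_request_review", tool)
```

For example, with `sanctioned = {"ApprovedAssistant"}` and `alternatives = {"RandomChatbot": "ApprovedAssistant"}`, a user of `RandomChatbot` is redirected rather than simply blocked, which keeps usage visible instead of pushing it underground.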

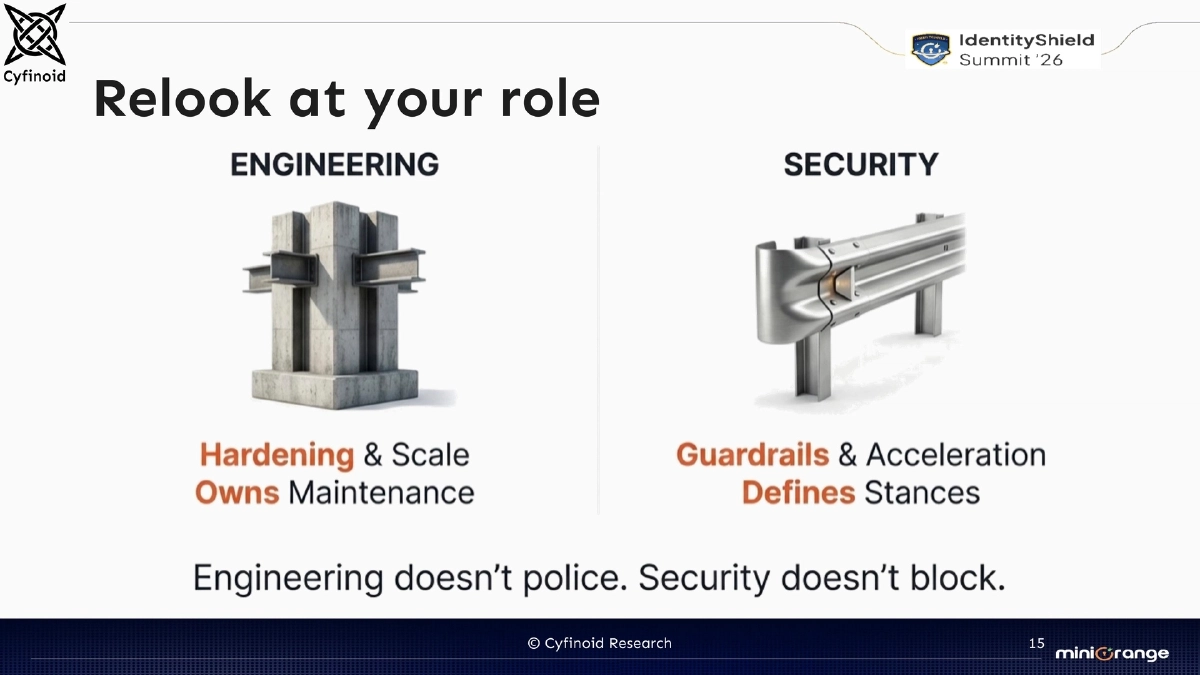

Rethinking Security Roles:

- Security practitioners need to re-examine their role in the context of AI-driven development

- The focus shifts from gatekeeping to enabling secure AI adoption while maintaining visibility and control

- Collaboration with development, business, and IT teams is essential for effective Shadow AI governance

Actionable Takeaways

- Conduct a Shadow AI inventory across your organization by analyzing network traffic, expense reports, endpoint tooling, and API access logs to identify unauthorized AI tool usage.

- Develop a Shadow AI risk classification framework that accounts for data sensitivity, credential exposure, production integration, and output trust levels.

- Implement a “carrots and sticks” governance model: provide sanctioned AI tool options with appropriate guardrails while establishing clear policies for unauthorized AI usage.

- Establish an operational loop for continuous Shadow AI management — discovery, assessment, policy enforcement, and periodic review — rather than treating it as a one-time audit.

- Redefine the security team’s role from gatekeeping to enabling secure AI adoption, collaborating with development and business teams to balance productivity with risk management.